TL;DR: Prism is a new option in the Augment model picker that routes each user turn to whichever underlying model best fits the work. For a team sending 10,000 user requests per month, that works out to roughly $20,000 in monthly savings (up to 30% lower cost), with a negligible quality difference compared to the frontier reasoning models it routes between. Select Prism in the picker to get frontier-model quality at lower cost.

How Prism performs vs. frontier reasoning models

On our internal multi-turn coding benchmark, Prism matches the best individual model in our comparison on quality while costing about 20-30% less per task than the frontier reasoning models. This benchmark emulates real sessions, with tasks of varying complexity, more closely than single-task benchmarks such as SWE-Bench and Terminal Bench. (More on our methodology below.)

Two Prism configurations appear below: one tuned to match Opus 4.7's quality envelope at lower cost, and one tuned to match GPT 5.5.

We know from our customers that developers and teams have strong preferences for different model families: with Prism, you can stay in the model family you like, at lower cost.

| Variant | Target | Routes between |
|---|---|---|
| Prism (Claude + Gemini) | Opus 4.7 | Opus 4.7, Sonnet 4.6, Gemini Flash 3.0 |
| Prism (GPT + Kimi) | GPT 5.5 | GPT 5.5, GPT 5.4, Kimi K2.6 |

Each Prism configuration delivers quality in the same range as the model it's tuned to match, at a lower cost per task.

| Model | Avg overall score (80% CI) | Cost per task |
|---|---|---|
| Prism (GPT + Kimi) | +0.30 ± 0.14 | $5.25 |
| GPT 5.5 | +0.21 ± 0.05 | $7.31 |
| Prism (Claude + Gemini) | +0.11 ± 0.08 | $4.91 |
| Opus 4.7 | +0.08 ± 0.10 | $6.81 |
| Sonnet 4.6 | −0.11 ± 0.05 | $3.67 |
| Kimi K2.6 | −0.23 ± 0.09 | $3.32 |
| Opus 4.6 | −0.37 ± 0.19 | $5.16 |

Each variant hits its design target on quality and is cheaper than the corresponding manual pick. That's the result a router should produce.
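The TL;DR's savings figure follows from the cost column above. A minimal sketch of the arithmetic, assuming (as the TL;DR implicitly does) that one user request costs about as much as one benchmark task; `monthly_savings` is an illustrative name, not an Augment API.

```python
def monthly_savings(baseline_cost: float, prism_cost: float, requests_per_month: int) -> tuple[float, float]:
    """Return (fractional saving per task, dollars saved per month)."""
    saved_per_task = baseline_cost - prism_cost
    return saved_per_task / baseline_cost, saved_per_task * requests_per_month

# Cost per task from the table: each Prism variant vs. the model it targets.
for target, baseline, prism in [("GPT 5.5", 7.31, 5.25), ("Opus 4.7", 6.81, 4.91)]:
    frac, dollars = monthly_savings(baseline, prism, 10_000)
    print(f"vs. {target}: {frac:.1%} lower cost, ${dollars:,.0f}/month")
# → vs. GPT 5.5: 28.2% lower cost, $20,600/month
# → vs. Opus 4.7: 27.9% lower cost, $19,000/month
```

Both pairs land near 28%, consistent with the "up to 30%" and "roughly $20,000" figures in the TL;DR.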

A few caveats about the methodology. One repository is a narrow benchmark: a model that is especially good or bad at idiomatic Go (the language our internal benchmark uses) will look better or worse here than it would across a broader suite. The judge is itself an LLM. We've validated that it correlates with human review at the benchmark level, but on any single task it can disagree with a human reviewer. Cost is metered at the API level, including prompt-cache reads and writes, which is the right measure of end-to-end production cost.

Methodology:

The benchmark is built from historical PRs on a large Go repo, converted into synthetic multi-message developer conversations that span a range of turn difficulties, from setup work through to the harder turns that actually move the change forward. Each task gives a router something to route over, rather than presenting one uniformly hard prompt the way single-task benchmarks do. Each task starts the agent at the PR's base commit and queues the full conversation in one session: the agent navigates the codebase, edits files, runs tools, and produces a final diff.

An LLM judge model then scores that diff against the original PR on correctness, completeness, code reuse, best practices, and unsolicited documentation, returning an aggregate score in [-1, 1]. Positive means the judge preferred the agent's diff; negative means it preferred the human PR. We averaged each model's scores across multiple runs to dampen judge noise.
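One way to turn per-run judge scores into a figure like "+0.30 ± 0.14" is a mean plus an interval half-width. The post doesn't say how its 80% intervals are computed; this sketch assumes a normal approximation to the standard error of the mean, and the example scores are made up.

```python
from statistics import NormalDist, fmean, stdev

def mean_with_ci(run_scores: list[float], level: float = 0.80) -> tuple[float, float]:
    """Mean of per-run judge scores and the half-width of a normal-approximation CI."""
    if len(run_scores) < 2:
        raise ValueError("need at least two runs to estimate spread")
    if any(not -1.0 <= s <= 1.0 for s in run_scores):
        raise ValueError("judge scores must lie in [-1, 1]")
    mean = fmean(run_scores)
    sem = stdev(run_scores) / len(run_scores) ** 0.5  # standard error of the mean
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.2816 for a two-sided 80% interval
    return mean, z * sem

# Hypothetical per-run aggregate scores for one model.
mean, half_width = mean_with_ci([0.2, 0.4, 0.1, 0.3])
print(f"{mean:+.2f} ± {half_width:.2f}")
```

More runs shrink the half-width roughly as one over the square root of the run count, which is why averaging across runs dampens judge noise.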

Why we built Prism

No single model wins everywhere, so Augment gives you access to the industry leaders, and the model picker is how we make that real. It's also a source of friction: which model should handle this task?

We looked at recent IDE agent traffic and found something we didn't expect: the top 10% of user turns consumed about 57% of all LLM rounds inside the agent loop. The remaining turns were, on average, much lighter work. Yet every one of them was billed at frontier rates, because that was the model the user picked at the start of the session.
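The concentration figure is a top-decile share: sort user turns by how many LLM rounds each triggered, then divide the rounds in the heaviest 10% by the total. A minimal sketch with made-up round counts; how Augment's telemetry actually counts rounds isn't described here.

```python
def top_share(rounds_per_turn: list[int], fraction: float = 0.10) -> float:
    """Share of all LLM rounds consumed by the heaviest `fraction` of user turns."""
    if not rounds_per_turn or sum(rounds_per_turn) == 0:
        raise ValueError("need at least one turn with a nonzero round count")
    ranked = sorted(rounds_per_turn, reverse=True)
    k = max(1, round(len(ranked) * fraction))  # size of the top slice, at least one turn
    return sum(ranked[:k]) / sum(ranked)

# Hypothetical round counts: 18 light turns and 2 heavy ones.
turns = [3] * 18 + [40, 35]
print(f"top 10% of turns: {top_share(turns):.0%} of rounds")  # → top 10% of turns: 58% of rounds
```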

This is rational behavior. Picking the right model for each turn is hard, and getting it wrong is expensive in both directions. Pick frontier and you pay frontier prices to run a test suite. Pick cheap and the model thrashes on a problem the bigger one would have solved in a single pass. Switching mid-task is worse, because it evicts the prompt cache, and the next turn pays roughly 10× what a cache hit would have cost. So most users settle on the biggest model and stop touching the picker.

As agent usage has scaled across engineering teams, that default has become a real line item. Engineering leaders are asking sharper questions about it: do we need the best model for every task? Can we hold quality and bring spend down? Prism is our answer.

How Prism does cache- and complexity-aware routing

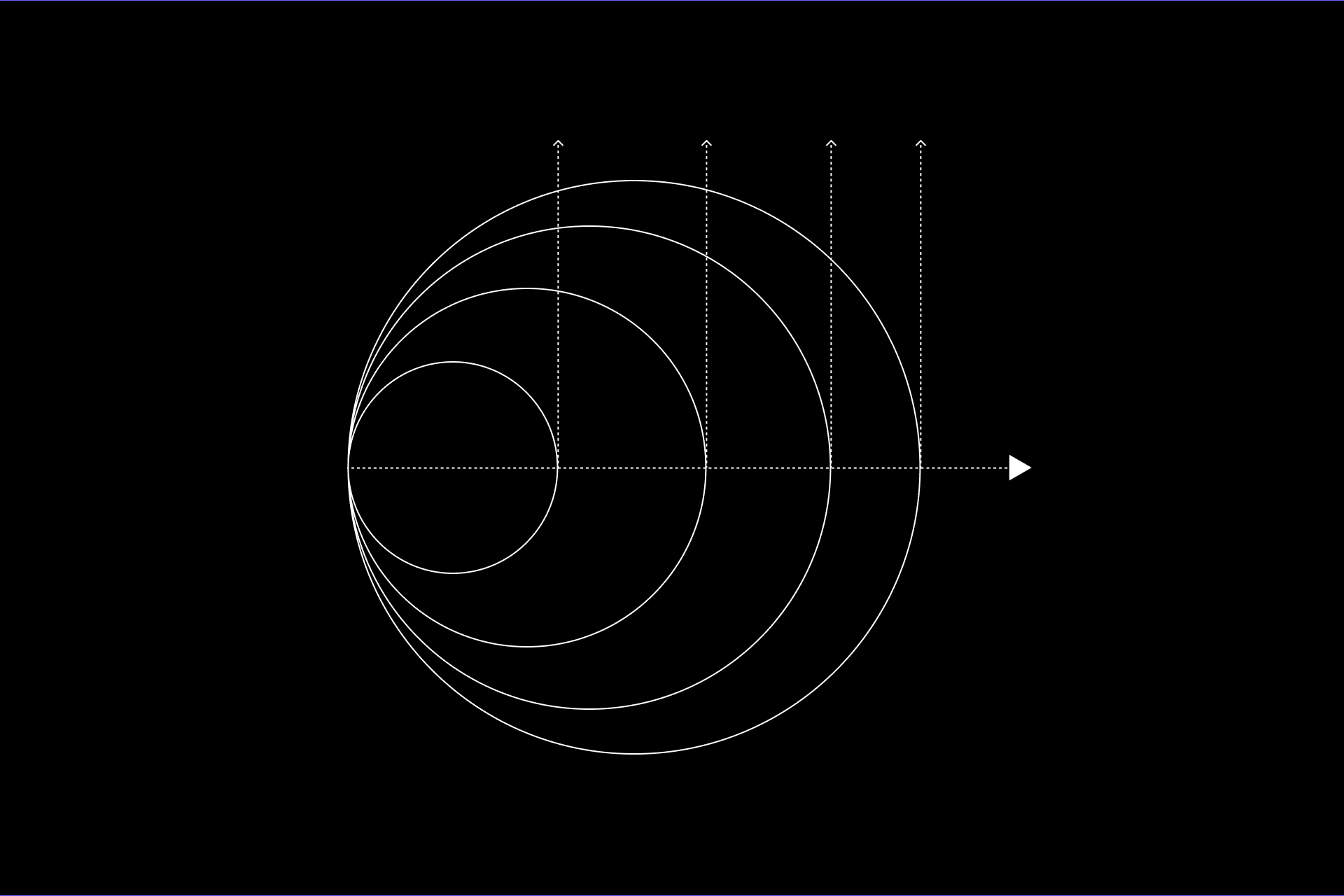

Prism is a planner on top of a pool of underlying models. Before each user turn, a small and fast planner model reads the request and decides which of the underlying models should handle it. From the outside Prism behaves like any other model in the picker. You pick Prism, Prism picks the model.
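"You pick Prism, Prism picks the model" is a composite pattern: Prism exposes the same interface as the models it wraps and delegates each turn to whichever one the planner names. A minimal sketch; `Model`, `EchoModel`, and the keyword-based `toy_planner` are hypothetical stand-ins (the real planner is a small LLM, not a keyword rule).

```python
from dataclasses import dataclass
from typing import Callable, Protocol

class Model(Protocol):
    name: str
    def complete(self, prompt: str) -> str: ...

@dataclass
class EchoModel:
    """Hypothetical stand-in for an underlying model; tags replies with its name."""
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"

@dataclass
class Prism:
    """Looks like any other Model to the caller, but delegates to the planner's pick."""
    pool: dict[str, Model]
    planner: Callable[[str], str]  # returns a key into `pool`
    name: str = "Prism"

    def complete(self, prompt: str) -> str:
        choice = self.planner(prompt)
        return self.pool[choice].complete(prompt)

def toy_planner(prompt: str) -> str:
    # Hypothetical heuristic standing in for the planner model.
    return "frontier" if "refactor" in prompt.lower() else "fast"

prism = Prism(pool={"frontier": EchoModel("big"), "fast": EchoModel("small")}, planner=toy_planner)
print(prism.complete("Run the test suite"))       # → [small] Run the test suite
print(prism.complete("Refactor the auth layer"))  # → [big] Refactor the auth layer
```

Because `Prism` satisfies the same `Model` protocol, anything that accepts a model (like the picker) can accept Prism without special-casing it.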

The hard part isn't picking. It's switching. Modern coding agents lean heavily on prompt caching to keep cost and latency tolerable on long sessions, and switching models mid-conversation throws the cache away. That's the same ~10× penalty described above, paid every turn the router changes its mind. A naive router that re-routes every turn would burn most of its potential savings on cache misses, and could easily end up more expensive than just staying on one model.
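A back-of-the-envelope comparison makes the naive-router problem concrete. The sketch below prices only the resent prompt context and ignores output tokens, context growth, and cache-write premiums; the token count and per-million-token prices are hypothetical, chosen so that a cache miss costs 10× a cache hit.

```python
def session_prompt_cost(route: list[str], context_tokens: int,
                        prices: dict[str, tuple[float, float]]) -> float:
    """Prompt cost of a session that resends `context_tokens` on every turn.

    `prices` maps model -> (uncached $ per M tokens, cache-read $ per M tokens).
    A turn hits the cache only if the previous turn ran on the same model.
    """
    total = 0.0
    previous = None
    for model in route:
        uncached, cached = prices[model]
        rate = cached if model == previous else uncached
        total += context_tokens / 1_000_000 * rate
        previous = model
    return total

# Hypothetical prices with a 10x cache-miss penalty on both models.
PRICES = {"frontier": (5.00, 0.50), "cheap": (1.00, 0.10)}
stay = session_prompt_cost(["frontier"] * 10, 100_000, PRICES)
flip = session_prompt_cost(["frontier", "cheap"] * 5, 100_000, PRICES)
print(f"stay: ${stay:.2f}, re-route every turn: ${flip:.2f}")  # → stay: $0.95, re-route every turn: $3.00
```

Even with half the turns on a model five times cheaper, the alternating route costs about three times as much, because every turn misses the cache.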

Prism's job is to switch only when the expected win from a different model exceeds the cost of the cache eviction. In practice that means the planner doesn't get to override an in-progress turn, the routing decision is sticky across the agent's tool-call follow-ups within a turn, and when a switch does happen we manage the context handed to the new model so the cost of the switch stays bounded.

Prism's planner picks an underlying model for each user turn, with a cache-aware step that switches only when the gain outweighs the cost of evicting the prompt cache.
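The switching rule can be written as an inequality: move to a candidate model only between user turns, and only when its expected advantage over the rest of the session exceeds the one-time cost of rebuilding the cache. A minimal sketch under stated assumptions, not Prism's implementation: `should_switch` and its parameters are hypothetical, and it assumes the candidate's advantage (including any quality benefit) can be priced in dollars per turn.

```python
def should_switch(
    gain_per_turn: float,    # hypothetical: $ advantage per turn once the candidate's cache is warm
    remaining_turns: int,    # expected turns left in the session
    context_tokens: int,     # prompt context the candidate must ingest uncached
    uncached_rate: float,    # candidate's uncached input price, $ per M tokens
    cached_rate: float,      # candidate's cache-read price, $ per M tokens
    mid_turn: bool,          # True while the agent is still working on the current turn
) -> bool:
    """Switch only between user turns, and only if the gain beats the cache eviction."""
    if mid_turn:
        return False  # never override an in-progress turn or its tool-call follow-ups
    # Extra cost of the first turn on the new model: full price instead of a cache read.
    eviction_cost = context_tokens / 1_000_000 * (uncached_rate - cached_rate)
    return gain_per_turn * remaining_turns > eviction_cost

# Hypothetical 100k-token context on a candidate priced at $5/M uncached, $0.50/M cached
# (eviction cost $0.45).
print(should_switch(0.10, 10, 100_000, 5.00, 0.50, mid_turn=False))  # → True  ($1.00 > $0.45)
print(should_switch(0.02, 10, 100_000, 5.00, 0.50, mid_turn=False))  # → False ($0.20 < $0.45)
print(should_switch(0.10, 10, 100_000, 5.00, 0.50, mid_turn=True))   # → False (mid-turn)
```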

Additional evaluations

Terminal Bench 2.0

Single-task benchmarks like this one are the worst case for a router. Most tasks are hard enough that the optimal policy is "always use the strongest model," which leaves a router very little room to win on quality. The best it can do is not lose.

| Model | Pass Rate | Cost per task |
|---|---|---|
| Prism (GPT + Kimi) | 75.7% | $0.68 |
| GPT 5.5 | 76.0% | $0.82 |
| Gemini 3.1 | 67.6% | $0.64 |
| GPT 5.4 | 66.5% | $0.49 |
| Opus 4.7 | 64.0% | $0.85 |
| Prism (Claude + Gemini) | 64.0% | $0.89 |

Both Prism variants land within a fraction of a point of the model they're tuned to match: Prism (GPT + Kimi) vs. GPT 5.5 (within 0.3pp), Prism (Claude + Gemini) vs. Opus 4.7 (exactly tied). No obvious quality regression from routing in either case, on a workload where any regression would be most likely to show.

Cost is more interesting. Prism (GPT + Kimi) runs ~17% cheaper per task than GPT 5.5 and ~20% cheaper than Opus 4.7. The routing wins still show up.

Prism (Claude + Gemini) ends up slightly more expensive per task than Opus 4.7, and the cause isn't planner overhead (the planner is a cheap model and a small fraction of total cost). It's that some of the cheaper models in this pool needed more tokens to finish the same task, eating their own cost advantage. A "cheaper" model isn't cheaper if it grinds for twice as many turns to land in the same place.
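Cost per task is price per token times tokens per task, so a lower sticker price only wins if the token count doesn't grow faster. A worked sketch with hypothetical prices and token counts: a model that is 40% cheaper per token but needs twice the tokens ends up 20% more expensive per task.

```python
def cost_per_task(price_per_m_tokens: float, tokens_per_task: int) -> float:
    """Dollar cost of one task at a flat per-million-token price."""
    return price_per_m_tokens * tokens_per_task / 1_000_000

def break_even_token_ratio(cheap_price: float, strong_price: float) -> float:
    """How many times more tokens the cheaper model can use before it stops being cheaper."""
    return strong_price / cheap_price

strong = cost_per_task(10.0, 50_000)  # hypothetical: $10/M tokens, 50k tokens
cheap = cost_per_task(6.0, 100_000)   # 40% cheaper per token, but twice the tokens
ratio = break_even_token_ratio(6.0, 10.0)
print(f"strong ${strong:.2f}, cheap ${cheap:.2f}, break-even at {ratio:.2f}x tokens")
# → strong $0.50, cheap $0.60, break-even at 1.67x tokens
```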

SWE-Bench Pro

We ran the same two Prism variants on SWE-Bench Pro (731 instances), another single-task benchmark and an even more extreme instance of the "worst case for routers" caveat than Terminal Bench. Terminal Bench has a small tail of easier tasks where a cheaper model is the right call; SWE-Bench Pro is uniformly hard, which means the optimal policy really is "always use the strongest model" and a router has nowhere to win on quality at all.

| Model | Pass Rate | Cost per instance |
|---|---|---|
| Opus 4.7 | 61.8% | $1.98 |
| Prism (Claude + Gemini) | 59.5% | $1.85 |
| GPT 5.5 | 53.6% | $2.15 |
| Prism (GPT + Kimi) | 52.9% | $1.88 |

The interesting result is that even here, both variants stay close to their targets. Prism (GPT + Kimi) lands within 0.7pp of GPT 5.5 on quality and is ~12% cheaper per instance. Prism (Claude + Gemini) lands within 2.3pp of Opus 4.7 and is ~7% cheaper per instance.

So even on a workload where every task is hard and a router has structurally no room to win on quality, both variants come in within a couple of points of the model they're tuned to match, at lower cost per instance. The cost advantage is smaller than on our internal multi-turn benchmark and the quality gap is wider than on Terminal Bench, and that's the expected shape: as a workload moves further into "always use the strongest model" territory, the routing gain compresses, but it doesn't invert. Routing holds up even where gains are hardest to make.

Planner overhead

The planner is an extra LLM call before the routed model starts streaming, which costs both money and latency. Both numbers turn out to be small relative to the work the routed model is doing, but the latency one is more interesting.

On cost: across the runs that produced the table above, the planner accounts for $0.91 of $2,649 in total spend, or about 0.03%. Per Prism run of 25 tasks, the planner contributes between $0.14 and $0.17, roughly 0.10 to 0.14% of that run's total cost. For context, conversation compression is about ten times larger at 0.4%. The planner itself isn't a meaningful cost driver.

On latency: over the last week of production traffic, the planner call ran in about 2.6 seconds at the mean (p50 2.6s, p90 4.0s, p99 5.4s) on the turns where it fires. Prism caches the routing decision per conversation and reuses it on subsequent tool-result turns inside the same agent loop, because there's no point re-classifying the task while the agent is mid-execution on its own tool calls. The planner ran on about 4% of all chat-host turns in that window. The other 96% were tool-result follow-ups that reused the cached decision and paid no planner overhead. Across every turn the chat host served, the planner accounts for roughly 3% of total request time.
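The planner's low amortized overhead comes from when it runs: only on a new user message, with the decision cached per conversation and reused for the tool-result turns that follow. A minimal sketch of that caching; `RoutingCache`, the `kind` values, and the planner callable are hypothetical names, not Prism's API.

```python
from typing import Callable

class RoutingCache:
    """Runs the planner once per user message; tool-result turns reuse the decision."""

    def __init__(self, planner: Callable[[str], str]) -> None:
        self._planner = planner
        self._decisions: dict[str, str] = {}  # conversation id -> routed model
        self.planner_calls = 0

    def route(self, conversation_id: str, kind: str, content: str) -> str:
        if kind not in ("user_message", "tool_result"):
            raise ValueError(f"unknown turn kind: {kind!r}")
        # Re-plan on a fresh user message, or if this conversation has no decision yet.
        if kind == "user_message" or conversation_id not in self._decisions:
            self.planner_calls += 1
            self._decisions[conversation_id] = self._planner(content)
        return self._decisions[conversation_id]

# Hypothetical planner and session: one user message followed by 24 tool-result turns.
cache = RoutingCache(lambda text: "frontier" if "design" in text else "fast")
turns = [("user_message", "design a retry policy")] + [("tool_result", "ok")] * 24
models = [cache.route("conv-1", kind, text) for kind, text in turns]
print(cache.planner_calls, len(turns), set(models))  # → 1 25 {'frontier'}
```

The example is sized so the planner fires on 1 of 25 turns (4%); every other turn in the loop skips the planner call entirely.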

The per-turn framing is the one users feel. On a turn where the planner runs, the median latency for Prism (GPT + Kimi) is around 6 seconds, and the planner is about 2.6 of those seconds. That's 30 to 40% of user-perceived latency on the turns where the user is waiting for a reply to a new message. For long agent sessions where the routed model is going to spend tens of seconds working anyway, that latency is less noticeable. For short interactive turns where the model would have replied in 4 to 5 seconds on its own, it's a felt cost.

What we're still tuning

Four things we know about and plan to address:

- Surfacing the routed-to model for power users. Prism hides the underlying choice by design. But for power users, and for our own debugging, we'll add a way to surface which model handled a turn without changing the default experience.

- Scoping the routing pool. Prism's set of underlying models is fixed today. If you'd rather it never use a particular model, or only route between a subset, there's no way to express that yet. We'll add a way to scope the pool.

- Cutting the planner's added latency. The planner sits in front of the routed model, so its ~2.6s shows up as time-to-first-token on the turns where it runs. We have a few directions in flight to bring that down.

- Control over cost vs. quality. Some users may want explicit control over the cost-quality trade-off. We’re investigating a "prefer cheap" or "prefer best" knob for users and teams who want to control those choices.

Try Prism today

You can choose either Prism variant in your model picker today.

Billing rolls up under a single Prism line item. The underlying model that handled any given turn isn't surfaced. The point is to stop making you think about it.

Try Prism in your next agent session and let us know how it feels. We will continue to find new ways to help customers understand and optimize the cost/quality tradeoffs in agentic engineering.

Written by

John Mu

Member of Technical Staff

John Mu is a member of technical staff at Augment Code, where he works on the routing and infrastructure behind Augment's coding agents. He spent the last three years at Stripe, most recently leading the team building defenses against advanced attacks. He holds a PhD in electrical engineering from Stanford.